Performance Scaling with HD 5870


Introduction, Setup, Overclocking

"Bottleneck" is a term often used loosely when discussing the performance characteristics of PC gaming. Hopefully an explanation of a bottle isn't necessary, but you never know...

Ultimate performance is the liquid that enthusiasts want to gush out of their machine (although I'm sure OCAU beer would be grudgingly accepted by most). But there's always something holding performance back, and the bottleneck of a computer system can be any one of several components, depending on the task in question. Map loading in games, for example, is largely dependent on secondary storage (hard drive) speeds. General single-threaded application performance is largely dependent on CPU architecture and frequency. Professional 3D rendering thrives on CPU core count. Databases love lots of fast memory. But how about high resolution gaming?

General opinion has it that the GPU will be the main bottleneck, meaning that upgrading other components such as the CPU or RAM will have a negligible effect on performance. But is that always the case? Questions fly on the OCAU forums from members wondering if purchasing a particular piece of hardware is worth it, given they play X game at Y resolution with Z other hardware.

OCAU is based around overclocking components, so instead of throwing a number of different CPU and GPU models at a set of tests, this article will focus on the effect of overclocking a Phenom II CPU, a Radeon 5870 GPU, and a combination of the two, all at Full HD resolution (1920x1080). From this we hope to see exactly where the bottleneck for our system is in various games, and how each game responds to overclocking.
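One way to put a number on "where the bottleneck is" is to compare how much the frame rate rises against how much the clock was raised. The sketch below is only an illustration of that idea: the function name `scaling_efficiency` and its parameters are hypothetical, and it is not necessarily how the results in this article were analysed.

```python
def scaling_efficiency(fps_stock: float, fps_oc: float,
                       clock_stock: float, clock_oc: float) -> float:
    """Fraction of a clock increase that shows up as extra frame rate.

    Around 1.0: frame rate rose in step with the clock, so the
    overclocked component is likely the limiting factor.
    Around 0.0: the overclock made little difference, so the
    bottleneck is probably elsewhere.
    """
    clock_gain = clock_oc / clock_stock - 1.0
    if clock_gain == 0:
        raise ValueError("stock and overclocked clocks are identical")
    fps_gain = fps_oc / fps_stock - 1.0
    return fps_gain / clock_gain
```

Running it once with the GPU's stock and overclocked figures and once with the CPU's, for the same game, gives two numbers that can be compared directly: whichever component scores closer to 1.0 is the one holding that game back.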

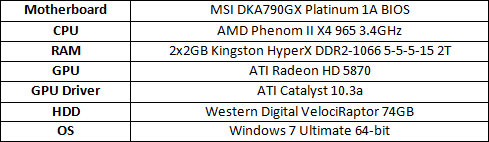

Test system specification and rendering options:

All games were set to the highest possible image quality, with the following exceptions meriting explanation:

- Crysis: WARHEAD ran with the Very High (tweaked) setting in the HWBench benchmarking tool, with 16x Anisotropic Filtering (AF) on all tests.

- S.T.A.L.K.E.R.: Call of Pripyat used the benchmark application's Ultra preset with Enhanced full dynamic lighting (DX11), tessellation, contact hardening shadows and HDAO (a new, higher quality Screen Space Ambient Occlusion, or SSAO, technique) all enabled.

It's worth bearing these conditions in mind when comparing to other reviews and benchmarks around the web, as writers will often use an in-game preset for continuity with previous tests.

Overclocking:

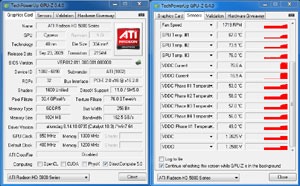

The Radeon 5870 GPU (or any other ATI model) can be overclocked a few different ways. Most easily, ATI's Catalyst suite provides an OverDrive function. This has a pre-determined clock ceiling, presumably to keep thermal properties under control and minimise the chances of an eager newbie frying their expensive GPU.

Flashing the BIOS of the GPU requires a little more research and effort, yet offers the possibility of noticeably higher performance. Like all overclocking, the flip-side is more heat, higher power draw, and more risk of damage to your hardware. This might be old news to most, but there are always a few readers that aren't aware, so it's a good warning to repeat.

The GPU's core voltage was bumped up from 1.1625V to 1.2625V, allowing a relatively conservative 100MHz increase to 950MHz. The memory wasn't spared, getting the same 100mV increase for a total of 1.25V, enabling 1350MHz from the retail 1250MHz.
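To put those GPU figures in proportion, the short Python sketch below converts them to percentage increases. The numbers come from the paragraph above; the 850MHz stock core clock is inferred from "100MHz increase to 950MHz" rather than stated outright, and the helper name `percent_gain` is just for illustration.

```python
def percent_gain(stock: float, overclocked: float) -> float:
    """Return the relative increase from stock to overclocked, in percent."""
    return (overclocked - stock) / stock * 100.0


# (stock, overclocked) pairs quoted in the text.
# The 850MHz stock core clock is inferred as 950MHz minus the 100MHz increase.
gpu_changes = {
    "core clock (MHz)": (850.0, 950.0),
    "memory clock (MHz)": (1250.0, 1350.0),
    "core voltage (V)": (1.1625, 1.2625),
}

for name, (stock, oc) in gpu_changes.items():
    print(f"{name}: {stock} -> {oc} (+{percent_gain(stock, oc):.1f}%)")
```

That works out to roughly 12% more core clock and 8% more memory clock, for a core voltage increase of a little under 9%.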

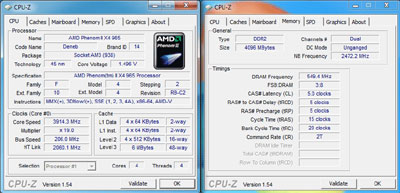

The Phenom II CPU was clocked up to 3.914GHz (19x multiplier with an HTT clock of 206MHz) for an increase of roughly 500MHz, whilst the RAM was boosted to 1098MHz with the same 5-5-5-15 timings. The CPU's internal north bridge was nudged up to a fairly modest 2.47GHz, compared to the stock 2GHz.
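The CPU-side clocks all hang off the same HTT reference clock, so the arithmetic is easy to check. In the sketch below, the 19x CPU multiplier and 206MHz HTT clock are from the text; the 12x north bridge multiplier is an assumption, chosen only because 12 x 206MHz lands on the quoted 2.47GHz.

```python
def derived_clock_mhz(reference_mhz: float, multiplier: float) -> float:
    """A derived clock is the reference (HTT) clock times its multiplier."""
    return reference_mhz * multiplier


HTT_MHZ = 206.0         # from the text
CPU_MULTIPLIER = 19.0   # from the text
NB_MULTIPLIER = 12.0    # assumption: not stated, but matches ~2.47GHz
NB_STOCK_MHZ = 2000.0   # "stock 2GHz" from the text

cpu_mhz = derived_clock_mhz(HTT_MHZ, CPU_MULTIPLIER)
nb_mhz = derived_clock_mhz(HTT_MHZ, NB_MULTIPLIER)
nb_gain_percent = (nb_mhz - NB_STOCK_MHZ) / NB_STOCK_MHZ * 100.0

print(f"CPU: {cpu_mhz:.0f}MHz")
print(f"North bridge: {nb_mhz:.0f}MHz (+{nb_gain_percent:.1f}% over stock)")
```

Because everything scales with the reference clock, raising the HTT from its default lifts the CPU, north bridge and memory together unless their individual multipliers or dividers are adjusted to compensate.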

So, with more CPU & GPU cycles per second, a faster L3 cache, and higher system & GPU memory bandwidth, what gains can be achieved in high resolution gaming? Is GPU bottlenecking still holding back gamers at Full HD, or is overclocking your CPU just as useful as overclocking your GPU?


All original content copyright James Rolfe.

All rights reserved. No reproduction allowed without written permission.
